PTerm now supports Pharo 9 and Pharo 10

PTerm, the (only) terminal emulator for Pharo, was initially developed and maintained on Pharo 7. Due to some major API changes, PTerm was not compatible with later versions of Pharo such as 9 and 10. Porting it to Pharo 9 and 10 had long been on my to-do list, but I didn't have spare time for the task. Fortunately, with the help of Kris, and after many issues and pull requests, PTerm finally works on Pharo 9 and 10.

Web based VNC client with AntOS docker image

A long time ago I developed WVNC, a simple WebSocket-based protocol that allows connecting to a VNC server from a browser. WVNC consists of:

- A server-side plugin (for the Antd web server) that (1) acts as a bridge between the VNC server and the web server; (2) defines the base protocol on top of WebSocket for streaming screen data and events to the client. JPEG compression is used to reduce the stream bandwidth.

- A client-side API called wvnc.js that implements the protocol defined by the server plugin, decodes JPEG data from the server and renders the data on an HTML5 canvas.

The protocol works really well for my personal needs, as I use it on a daily basis to access my home VNC server from work :), using nothing but a web browser. However, setting up WVNC from scratch is not a trivial task, as it depends on my Antd server, which is not a popular web server, so Stack Overflow is not an option :). Many readers have contacted me asking for how-to instructions.

As WVNC is finally a part of the AntOS ecosystem, and AntOS is available as an all-in-one Docker image, anyone can now easily run their own web-based VNC client with a single command line, without the headache of building everything from scratch.

CI & automation: Multi-architecture build of software with Jenkins and Docker

Multi-arch software building and distribution is a complex and time-consuming maintenance task in which the maintainer/developer needs to compile their software for all supported architectures, often on a single machine. Automation solutions such as Jenkins allow automating this task and facilitate continuous integration and continuous delivery. Multi-arch software building on a single machine involves the use of different techniques:

- Cross-build: using a cross-toolchain to build the software for each target system. This solution is simple, but comes at the cost of polluting the host system with many toolchains which may be incompatible with one another. Maintaining and updating these toolchains is complicated.

- Virtualization: solutions such as Docker address these problems by using a sandboxed/containerized image with all the tools necessary to build the software for each target architecture. Docker facilitates the setup, maintenance and update of different build environments using images.

This post introduces the basic steps of setting up an automation server that builds and distributes multi-arch software using Jenkins and Docker.

Monitoring and collecting syslog messages from Unix Domain Socket

Application logging is the traditional way to monitor an application/service. On *nix-based systems, syslog is a common but powerful tool for centrally monitoring application logs. The primary use of syslog is system management, as capturing log data is critical for sysadmins, DevOps teams, system analysts, etc. This log data is helpful when investigating/troubleshooting problems and keeping systems functioning healthily.

Syslog offers applications a standard log format and a standard alert system with different severity levels, in the form of a log API. Log daemons such as rsyslog are versatile and flexible, with various configuration options that enable different ways to interact with the logs: log to a file, log to a remote server via the network (TCP, UDP sockets), or log to a local Unix domain socket. Log clients or log analytics applications can collect log data from the log daemon via these interfaces.

Although it is feasible to read log messages directly from the regular syslog output files, it is preferable to collect log data from the daemon using the socket interface, since a socket is more suitable for data streaming. TCP/UDP sockets can be used to access log data over the network (TCP/IP). But if the application runs locally on the same machine as the log daemon, a Unix domain socket (UDS) may be the best option.

A Unix Domain Socket is an inter-process communication mechanism that allows bidirectional data exchange between processes running on the same machine. Since the data never leaves the machine, UDSs can avoid some checks and operations (like routing), which makes them faster and lighter than IP sockets.

In this post, we will learn how to collect log data from syslog via UDS in C, using rsyslog as the log daemon.

A use case will be presented at the end of the post.
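As a rough illustration of the idea, here is a minimal sketch written in Python for brevity (the post itself uses C). It assumes the log daemon has already been configured to forward messages to a datagram Unix domain socket; the socket path and the function names `open_log_socket` and `parse_priority` are hypothetical and not taken from the post. The only syslog detail relied upon is the standard `<PRI>` header, where PRI = facility * 8 + severity.

```python
import os
import socket
from typing import Tuple


def open_log_socket(path: str) -> socket.socket:
    """Bind a datagram Unix domain socket at `path` and return it."""
    if os.path.exists(path):
        os.unlink(path)  # remove a stale socket file left by a previous run
    sock = socket.socket(socket.AF_UNIX, socket.SOCK_DGRAM)
    sock.bind(path)
    return sock


def parse_priority(message: bytes) -> Tuple[int, int, str]:
    """Split b'<PRI>text' into (facility, severity, text)."""
    text = message.decode("utf-8", errors="replace")
    if not text.startswith("<") or ">" not in text:
        raise ValueError("missing <PRI> header")
    pri, rest = text[1:].split(">", 1)
    # PRI encodes both values: facility * 8 + severity
    facility, severity = divmod(int(pri), 8)
    return facility, severity, rest
```

A collector would typically call `open_log_socket` once with the path the daemon writes to, then loop on `sock.recv(8192)` and pass each datagram to `parse_priority`.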

AntOS v1.2.0-beta release

After a long testing period, AntOS v1.2.0-beta is now released!!!

Change logs

- GUI API improvements

- [x] File dialog should remember last opened folder

- [x] Add dynamic key-value dialog that works on any object

- [x] Window list panel should show window title in tooltip when mouse hovering on an application icon

- [x] Allow pinning application to system panel

- [x] Improve application list in MarketPlace

- [x] Allow triplet keyboard shortcut in GUI

- [x] CodePad allows setting shortcut in CommandPalette commands

- [x] CodePad should have a recent menu entry that remembers the top n opened files

- [x] Improve File application grid view

- [x] Label text should be selectable

- [x] Switch window using shortcut (CTRL+ALT+1, CTRL+ALT+2)

- [x] Loading bar animation on system panel

- [x] Multiple file upload support

- [x] Generic key-value dialog

- [x] Add bootstrap font support for icons

- [x] Classify applications by categories in start menu

- [x] Support vertical and horizontal resize window

- MarketPlace now classifies applications by categories

- CodePad is no longer a default system application; it has been moved to MarketPlace

- More applications added to MarketPlace

- Antos SDK

- SDK is no longer included in the Antos base release; it can be installed via MarketPlace

- The SDK now has a generic API that can be used in development tasks other than AntOS applications

- Heavy SDK tasks are now offloaded to workers

- Introduce a new JSON-based syntax for SDK task/target definition

- Starting from this version, a Docker image of the all-in-one AntOS system is available at: https://hub.docker.com/r/xsangle/antosaio

Demo

A demo of the VDE is available at https://app.iohub.dev/antos/ using username: demo and password: demo.

If you want to run AntOS VDE locally on your system, a Docker image is available at:

https://hub.docker.com/r/xsangle/antosaio/

AntOS applications (Available on the MarketPlace)

https://github.com/lxsang/antosdk-apps

Documentation

- API: https://doc.iohub.dev/antos/api/

- Documentation: https://doc.iohub.dev/antos (outdated, WIP)

Data visualization: global view of blog posts relationship

As stated in a post where I talked about using tf-idf to detect similarity between two blog posts, my blog is just a bunch of posts sorted by date: no categories, no fancy features like user interest tracking, post ranking, etc. I usually work in many different domains (robotics, IoT, backend, frontend platform design, etc.), so my posts are mixed up between these domains. This may make it difficult for readers to follow the topics that interest them on my blog.

So what is a good strategy for navigating between posts on a blog?

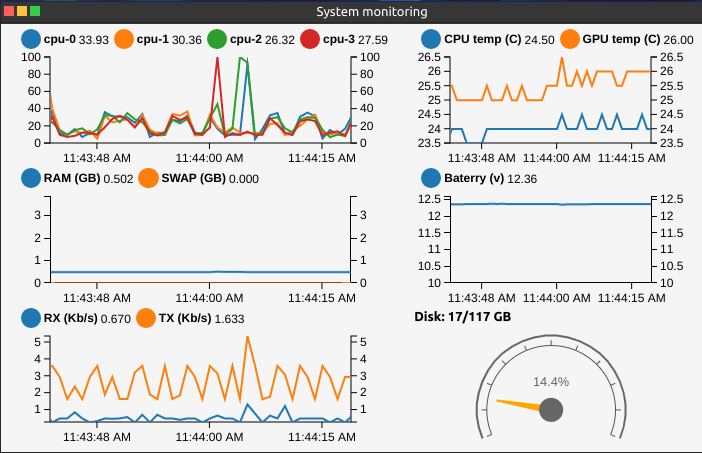
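For readers unfamiliar with the tf-idf similarity mentioned above, the general technique can be sketched as follows. This is a minimal, generic illustration and not the code used on this blog: the whitespace tokenizer, the plain `log(N/df)` weighting and the function names `tfidf_vectors` and `cosine` are all assumptions made for the example.

```python
import math
from collections import Counter
from typing import Dict, List


def tfidf_vectors(docs: List[str]) -> List[Dict[str, float]]:
    """Return one sparse tf-idf vector (term -> weight) per document."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        tf = Counter(tokens)
        vectors.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in tf.items()
        })
    return vectors


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)
```

Computing `cosine` for every pair of posts gives a similarity matrix, which is the kind of data a post relationship graph can be drawn from.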

sysmond: Simple service for (embedded) Linux system monitoring

While working on my DIY robot software (Jarvis) in headless mode, I came across a situation where I needed to monitor system resources such as CPU, battery, memory, network and temperature to measure the "greediness" of my robotic application. Furthermore, as the robot was battery powered, battery safety was a real concern, so I needed something to monitor the battery and shut down the system when the battery was low, to protect it from falling below the usable voltage range.

So I searched for an application/service that allows me to:

- Monitor system memory, CPU, storage usage and temperature

- Monitor network consumption

- Monitor the robot battery and power off the system if the battery is low

None of the existing applications/services satisfied all of these requirements, especially the battery monitoring feature. So I decided to write a small service that I called sysmond.

sysmond is a simple service that monitors and collects system information such as battery, temperature, memory, CPU, and network usage. The service can be used as a backend for applications that need to consult the system status. Although it is a part of the Jarvis ecosystem, sysmond is a generic service and can be easily adapted to other use cases.

Example of an AntOS web application that fetches data from sysmond and visualizes it as real-time graphs on my Jarvis robot system. Details on the use case can be found here

Sysmond monitors the resources available on the system via the user space sysfs interface provided by the Linux kernel.
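To give an idea of what reading from sysfs looks like, here is a minimal sketch in Python. It is not sysmond's actual implementation: the function names and the cutoff logic are hypothetical, and the default path assumes a board that exposes its first thermal zone at `/sys/class/thermal/thermal_zone0/temp` (the kernel reports this value in millidegrees Celsius).

```python
from pathlib import Path


def read_sysfs_int(path: str) -> int:
    """Read a sysfs attribute that holds a single integer."""
    return int(Path(path).read_text().strip())


def read_temperature_celsius(
    zone_path: str = "/sys/class/thermal/thermal_zone0/temp",
) -> float:
    """Return the temperature of a thermal zone in degrees Celsius."""
    # The kernel exposes the value in millidegrees Celsius
    return read_sysfs_int(zone_path) / 1000.0


def battery_is_low(voltage: float, cutoff: float) -> bool:
    """Hypothetical policy: the battery is low once it reaches the cutoff voltage."""
    return voltage <= cutoff
```

A monitoring loop would poll such attributes periodically and trigger a power-off when `battery_is_low` returns true; the cutoff value depends on the battery used.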

Jarvis Arduino firmware

This post is a follow-up to the previous post, JETTY: Jarvis Serial to ROS-2 Transport Layer.

The Arduino firmware, on the one hand, implements the JETTY protocol for communicating with ROS and, on the other hand, takes care of all low-level hardware communication, including:

- Reading of battery voltage from the ADS1115 16-Bit ADC sensor

- Reading of 9DOF IMU sensor data from MPU 9250 IMU sensor

- Reading of raw odometry data from two motor magnetic encoders

- Control of two motors via the Adafruit Motor Shield V2

The Arduino Firmware section of the Jarvis booklet presents the firmware implementation in detail. It covers the following topics:

- The JETTY protocol implementation on Arduino

- Different routines implemented on the firmware

- Odometry data reading from Hall effect sensors

- A strategy for battery voltage reading and monitoring

- Motor control

Follow-up reading at: https://doc.iohub.dev/jarvis/Ym9vazovLy9jXzIvc18yL0lOVFJPLm1k/Arduino_Firmware.md
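As a small illustration of the battery voltage reading mentioned above, the conversion from a raw ADS1115 sample to a battery voltage can be sketched as follows. It is written in Python for readability rather than Arduino C++ and is not the firmware's code: it assumes the ADC is configured for the ±4.096 V range (125 µV per count) and that the battery is measured through a resistor divider, whose values `r_top` and `r_bottom` are hypothetical parameters.

```python
# One count of the 16-bit signed ADS1115 at the ±4.096 V range (assumed gain setting)
ADS1115_LSB_VOLTS = 4.096 / 32768  # 125 microvolts


def adc_to_battery_voltage(raw: int, r_top: float, r_bottom: float,
                           lsb_volts: float = ADS1115_LSB_VOLTS) -> float:
    """Convert a raw ADC count to the battery voltage in volts.

    r_top and r_bottom are the resistors of an assumed voltage divider,
    with the ADC input connected across r_bottom.
    """
    v_adc = raw * lsb_volts
    # Undo the divider: V_battery = V_adc * (r_top + r_bottom) / r_bottom
    return v_adc * (r_top + r_bottom) / r_bottom
```

With a 30 kΩ / 10 kΩ divider, for instance, a raw reading of 16000 corresponds to 2.0 V at the ADC input and therefore 8.0 V at the battery.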

JETTY: Jarvis Serial to ROS-2 Transport Layer

My ROS-based DIY robot (presented in the previous post) uses the NVIDIA Jetson Nano for high-level robotic algorithms with the ROS 2 middleware. The Jetson is connected to an Arduino via a serial link for low-level hardware interaction and control.

As the Arduino is used for low-level communication with actuators/sensors, we need a software transport layer on top of the physical serial link (Jetson - Arduino) to stream (sensor) data/commands from the Arduino to ROS 2 and vice versa. On Dolly (my previous robot version), which used ROS 1, this was handled by Rosserial, a protocol for wrapping standard ROS serialized messages and multiplexing multiple topics and services over a serial link. On ROS 2, however, Rosserial is not available. Alternative solutions exist but are not mature enough, and some implementations require more computational resources than the Arduino Mega 2560 can offer.

So I decided to implement a dedicated transport layer for Jarvis called JETTY (Jarvis SErial to ROS-2 TransporT LaYer). I do not aim at a generic protocol for ROS to serial communication like Rosserial. Instead, the implementation of the transport layer is specific to the robot. However, the protocol must be easy to extend in order to adapt to any future upgrade of the robot, such as adding more sensors/actuators.

Requirements on the transport layer:

- The transport layer must allow streaming data in the form of frames (fixed size or not)

- Simple but reliable, unambiguous packet framing protocol; frames should be easy to identify

- Fast frame synchronization: When an endpoint (Arduino or ROS) connects to the Serial link in the middle of the data streaming, frame synchronization should be fast while minimizing the frames lost in the synchronization phase

- Frames should be verified using a checksum before being consumed by an endpoint

- Packet framing overhead is allowed but needs to be minimized

- The algorithms should be easy to implement and computationally inexpensive on both Jetson and Arduino

In brief, we need an efficient and reliable delimiting/synchronization scheme to detect frames with a short recovery time.

The details on the choice of protocol and algorithm, as well as insight into the implementation, are presented in a section of my Jarvis booklet accessible via the following link:
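To make these requirements more concrete, here is a minimal sketch of one possible framing scheme, written in Python for readability. It is not the actual JETTY frame format (which is described in the booklet): the flag/escape byte values, the HDLC-style byte stuffing and the one-byte XOR checksum are all assumptions chosen for illustration.

```python
from typing import List

FLAG = 0x7E      # frame delimiter (assumed value)
ESC = 0x7D       # escape byte (assumed value)
ESC_XOR = 0x20   # escaped bytes are XOR-ed with this value


def checksum(payload: bytes) -> int:
    """One-byte XOR checksum over the payload."""
    result = 0
    for byte in payload:
        result ^= byte
    return result


def encode_frame(payload: bytes) -> bytes:
    """Wrap payload + checksum between two FLAG bytes, escaping FLAG/ESC."""
    out = bytearray([FLAG])
    for byte in payload + bytes([checksum(payload)]):
        if byte in (FLAG, ESC):
            out += bytes([ESC, byte ^ ESC_XOR])
        else:
            out.append(byte)
    out.append(FLAG)
    return bytes(out)


def decode_stream(data: bytes) -> List[bytes]:
    """Extract every valid payload from a byte stream.

    Bytes received before the first FLAG are discarded, so an endpoint
    joining mid-stream loses at most the frame that was in flight.
    """
    frames = []
    buf = None        # None until the first FLAG has been seen
    escaped = False
    for byte in data:
        if byte == FLAG:
            # End of a frame: keep it only if the checksum matches
            if buf and not escaped and checksum(bytes(buf[:-1])) == buf[-1]:
                frames.append(bytes(buf[:-1]))
            buf = bytearray()
            escaped = False
        elif buf is None:
            continue  # not synchronized yet
        elif escaped:
            buf.append(byte ^ ESC_XOR)
            escaped = False
        elif byte == ESC:
            escaped = True
        else:
            buf.append(byte)
    return frames
```

In this sketch the overhead is two delimiter bytes and one checksum byte per frame, plus one extra byte for each escaped value, and resynchronization only requires waiting for the next FLAG byte.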

Jarvis: The DIY robot

It has been a while since I started to build an upgraded version of Dolly, my first DIY ROS-based robot. This upgraded version is named Jarvis.

Changes from the previous version:

- Hardware:

- The robot now uses tracks instead of wheels

- Jarvis' footprint is bigger and has more room to mount additional components

- Jarvis uses the NVIDIA Jetson Nano as its high-level control board instead of the Raspberry Pi 3B (used in Dolly). The Jetson board has more GPU and processing power, and is suitable for machine learning stuff (with the camera).

- Jarvis uses a step-down voltage regulator instead of a step-up regulator (Dolly); the regulator provides more juice (up to 3A for each output)

- 128 GB USB based SSD for operating system and storage instead of SD card

- Software:

- Linux Ubuntu 20.04

- ROS 2 is used instead of ROS (on Dolly)

As a work in progress, I'm now writing a booklet that details the building process of the robot, covering both hardware and software (Arduino, ROS 2), as well as some application use cases. The initial plan is:

- Introduction

- Robot modeling and simulating with ROS 2 and Gazebo

- Building the robot hardware step by step

- Basic robot controlling software with ROS 2

- Use case projects: such as localisation and mapping, autonomous navigation, obstacle avoidance with machine learning, etc.

All further updates on the booklet can be found here: https://doc.iohub.dev/jarvis/.

Stay tuned!!!